
Scan Context

NEWS (Nov, 2020): integrated with LIO-SAM

- A Scan Context integration for LIO-SAM, named SC-LIO-SAM (link), is also released.

NEWS (Oct, 2020): Radar Scan Context

- Evaluation code for radar place recognition (a.k.a. Radar Scan Context) has been uploaded.

- Please see the fast_evaluator_radar directory.

NEWS (April, 2020): C++ implementation

- C++ implementation released!

- See the directory cpp/module/Scancontext.

- Features:

- Light-weight: a single header and a single source file, named "Scancontext.h" and "Scancontext.cpp".

- The module uses a KD tree, implemented with nanoflann. nanoflann is also a single-header library, and that file is included in our directory.

- Easy to use: a user only needs to remember and use two API functions, makeAndSaveScancontextAndKeys and detectLoopClosureID.

- Fast: in our tests the loop detector runs at 10-15 Hz (for a 20 x 60 descriptor size and 10 candidates).

- Example: Real-time LiDAR SLAM

- We integrated the C++ implementation into a recent, popular LiDAR odometry code, LeGO-LOAM.

- That is, LiDAR SLAM = LiDAR Odometry (LeGO-LOAM) + Loop detection (Scan Context) and closure (GTSAM)

- For details, see cpp/example/lidar_slam or refer to this repository (SC-LeGO-LOAM).

- Scan Context is a global descriptor for LiDAR point clouds. It was proposed in this paper, and the details are summarized in this video.

@INPROCEEDINGS { gkim-2018-iros,

author = {Kim, Giseop and Kim, Ayoung},

title = { Scan Context: Egocentric Spatial Descriptor for Place Recognition within {3D} Point Cloud Map },

booktitle = { Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems },

year = { 2018 },

month = { Oct. },

address = { Madrid }

}

- This point cloud descriptor is used for place retrieval problems such as place recognition and long-term localization.

What is Scan Context?

- Scan Context is a global descriptor for LiDAR point clouds, designed especially for the sparse and noisy point clouds acquired in outdoor environments.

- It encodes egocentric visible information as below:

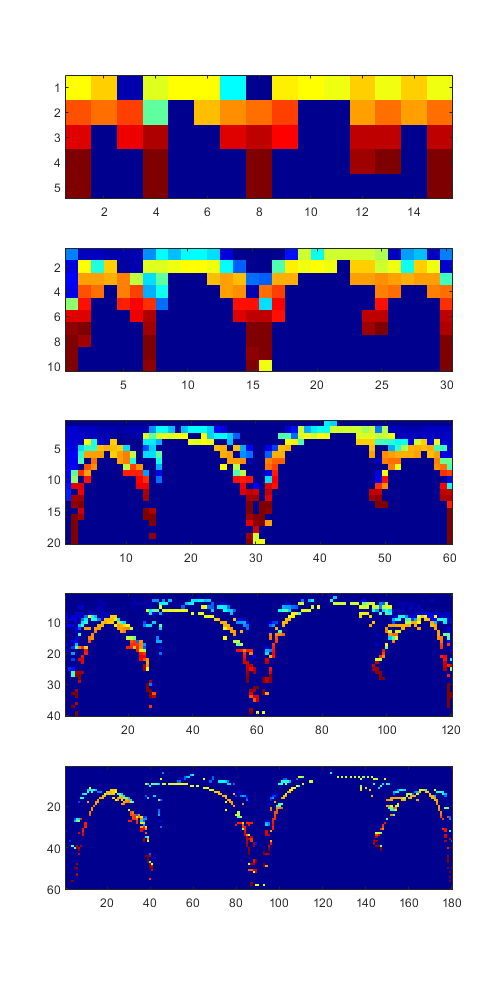

- A user can vary the resolution of a Scan Context. Below is an example of Scan Contexts at various resolutions for the same point cloud.
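As a rough illustration of the encoding described above, the sketch below bins points by range (rings) and azimuth (sectors) around the sensor and keeps one height value per bin. It is a minimal sketch, not the repository's code: the function name make_scan_context, the parameter names, the 80 m maximum range, the choice of the maximum height as the bin value (assumed here following the IROS18 paper), and the convention that heights are non-negative (for example, z already shifted by the sensor height) are all assumptions made for illustration. The 20 x 60 default mirrors the size mentioned for the C++ module.

```python
import math


def make_scan_context(points, num_rings=20, num_sectors=60, max_range=80.0):
    """Build a num_rings x num_sectors grid holding the max height per bin.

    points: iterable of (x, y, z) tuples in the sensor frame.
    Assumption: z is non-negative; empty bins keep the value 0.0.
    """
    desc = [[0.0] * num_sectors for _ in range(num_rings)]
    ring_width = max_range / num_rings          # metres per ring
    sector_width = 2.0 * math.pi / num_sectors  # radians per sector
    for x, y, z in points:
        rng = math.hypot(x, y)
        if rng >= max_range:
            continue  # ignore points beyond the descriptor's range
        azimuth = math.atan2(y, x) % (2.0 * math.pi)  # map to [0, 2*pi)
        # Clamp indices to guard against floating-point edge cases.
        ring = min(int(rng / ring_width), num_rings - 1)
        sector = min(int(azimuth / sector_width), num_sectors - 1)
        desc[ring][sector] = max(desc[ring][sector], z)
    return desc
```

Changing num_rings and num_sectors changes the descriptor resolution. For example, make_scan_context(points, num_rings=10, num_sectors=30) yields a coarser 10 x 30 descriptor of the same point cloud.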

How to use?: example cases

- This repository is organized around 3 example use cases.

- Most of the code is written in Matlab.

- The matlab directory contains the main functions, including Scan Context generation and the distance function.

- The example directory contains full example code for a few applications. We provide a total of 3 examples.

-

basics contains a literally basic codes such as generation and can be a start point to understand Scan Context.

-

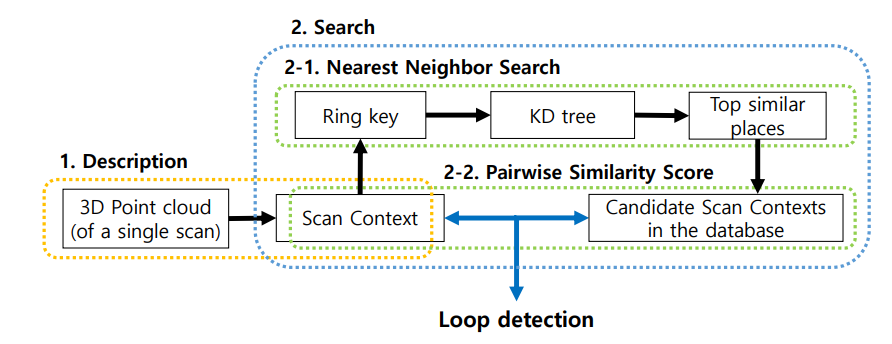

place recognition is an example directory for our IROS18 paper. The example is conducted using KITTI sequence 00 and PlaceRecognizer.m is the main code. You can easily grasp the full pipeline of Scan Context-based place recognition via watching and following the PlaceRecognizer.m code. Our Scan Context-based place recognition system consists of two steps; description and search. The search step is then composed of two hierarchical stages (1. ring key-based KD tree for fast candidate proposal, 2. candidate to query pairwise comparison-based nearest search). We note that our coarse yaw aligning-based pairwise distance enables reverse-revisit detection well, unlike others. The pipeline is below.

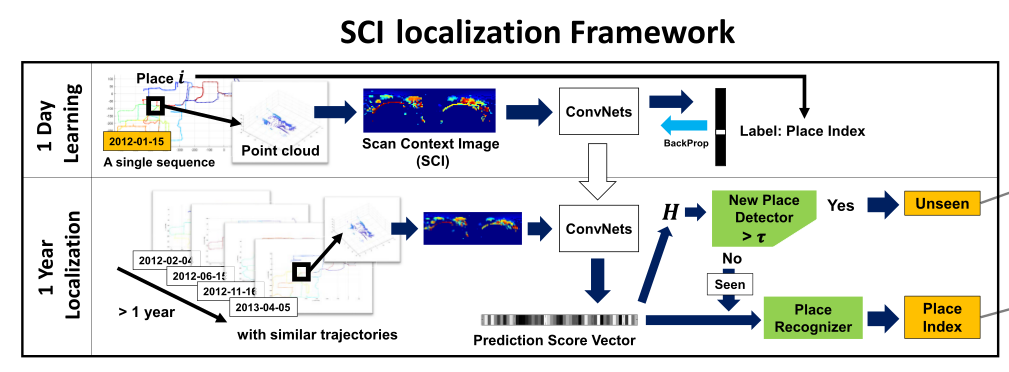

- long-term localization is an example directory for our RAL19 paper. To separate mapping from localization, there are separate train and test steps. The main training and test code is written in Python with Keras and is provided as Jupyter notebooks; only the data generation and performance evaluation code is written in Matlab. Some paths may not work directly in your environment, but the evaluation code (e.g., makeDataForPRcurveForSCIresult.m) will help you understand how this classification-based SCI-localization system works. The figure below depicts our long-term localization pipeline.

More details of our long-term localization pipeline can be found in the paper below, and we also recommend watching this video.

@ARTICLE{ gkim-2019-ral,

author = {G. {Kim} and B. {Park} and A. {Kim}},

journal = {IEEE Robotics and Automation Letters},

title = {1-Day Learning, 1-Year Localization: Long-Term LiDAR Localization Using Scan Context Image},

year = {2019},

volume = {4},

number = {2},

pages = {1948-1955},

month = {April}

}

- The SLAM directory contains a practical use case of Scan Context in a SLAM pipeline. The details are maintained in a separate repository, PyICP SLAM: full-Python LiDAR SLAM code that uses Scan Context as a loop detector.
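The two-stage search described for the place recognition example above can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the code in PlaceRecognizer.m or Scancontext.cpp: all function names are hypothetical, the ring key is taken here as the mean of each ring (one possible rotation-invariant summary), a brute-force ranking stands in for the KD tree, and the 0.2 acceptance threshold is an arbitrary placeholder. Descriptors are plain lists of rows (rings) with one column per sector.

```python
import math


def shift_columns(desc, k):
    """Circularly shift sectors by k; a column shift corresponds to a yaw rotation."""
    k %= len(desc[0])
    return [(row[-k:] + row[:-k]) if k else list(row) for row in desc]


def cosine_distance_columns(a, b):
    """Mean of (1 - cosine similarity) over columns non-empty in both descriptors."""
    total, used = 0.0, 0
    for j in range(len(a[0])):
        col_a = [row[j] for row in a]
        col_b = [row[j] for row in b]
        norm_a = math.sqrt(sum(v * v for v in col_a))
        norm_b = math.sqrt(sum(v * v for v in col_b))
        if norm_a == 0.0 or norm_b == 0.0:
            continue  # skip sectors that are empty in either descriptor
        dot = sum(x * y for x, y in zip(col_a, col_b))
        total += 1.0 - dot / (norm_a * norm_b)
        used += 1
    return total / used if used else 1.0


def scan_context_distance(query, candidate):
    """Return (smallest distance over all column shifts, shift that achieves it)."""
    best_dist, best_shift = float("inf"), 0
    for k in range(len(query[0])):
        d = cosine_distance_columns(query, shift_columns(candidate, k))
        if d < best_dist:
            best_dist, best_shift = d, k
    return best_dist, best_shift


def ring_key(desc):
    """One value per ring; unaffected by column shifts (assumed: the row mean)."""
    return [sum(row) / len(row) for row in desc]


def find_loop(query, database, num_candidates=10, threshold=0.2):
    """Stage 1: shortlist by ring key. Stage 2: pairwise comparison with yaw search.

    Returns (index, distance, shift); index is -1 when nothing passes the threshold.
    """
    q_key = ring_key(query)
    # Brute-force ranking used here as a stand-in for the ring-key KD tree.
    ranked = sorted(range(len(database)),
                    key=lambda i: math.dist(q_key, ring_key(database[i])))
    best_id, best_dist, best_shift = -1, float("inf"), 0
    for i in ranked[:num_candidates]:
        d, k = scan_context_distance(query, database[i])
        if d < best_dist:
            best_id, best_dist, best_shift = i, d, k
    if best_dist > threshold:
        return -1, best_dist, best_shift
    return best_id, best_dist, best_shift
```

Because the distance is minimized over every column shift, a place revisited with a different heading, including the opposite direction, can still match; the returned shift gives a coarse yaw estimate in units of one sector.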

Acknowledgment

This work is supported by the Korea Agency for Infrastructure Technology Advancement (KAIA) grant funded by the Ministry of Land, Infrastructure and Transport of Korea (19CTAP-C142170-02), and [High-Definition Map Based Precise Vehicle Localization Using Cameras and LIDARs] project funded by NAVER LABS Corporation.

Contact

If you have any questions, please contact:

paulgkim@kaist.ac.kr

License

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Copyright

- All code on this page is copyrighted by KAIST and Naver Labs and published under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 License. You must attribute the work in the manner specified by the author. You may not use the work for commercial purposes, and if you alter, transform, or build upon the work, you may distribute the resulting work only under the same license.